This interview is a transcription of the podcast, Steve Krug on Mobile Recording and Usability Testing.

Nancy Aldrich-Ruenzel: I’m here today with rockstar and usability expert Steve Krug. Welcome, Steve.

Steve Krug: Hi. Nice to see you, Nancy.

Nancy: Steve, as you all know, has written the wild bestseller Don’t Make Me Think and the companion title, Rocket Surgery. And one of the questions I asked Steve earlier when he stopped by our studio here in San Francisco today was, “What’s going on with mobile?” You can tell us a lot about how one could usability test in the mobile area. So, I’d like to concentrate on that, if you don’t mind.

Steve: Sure, it’s what people are interested in at the moment, since only a half dozen people still have desktops (laughter). I love reading those statistics. They keep moving up the date when there are going to be no desktops left.

Nancy: Exactly.

Steve: It’s true.

Nancy: So what’s the difference between doing usability testing on a desktop versus mobile?

Steve: For a long time I said it was – and it’s still true, I think – that it mostly had to do with how you either record the test or how you display it to observers, because of the platforms. Over the last 15 years, we’ve evolved stuff that makes that very easy. So if you’re working on a desktop, laptop, PC or Mac, there are a lot of tools for doing that to share with people in observation rooms. It used to be you needed the two-way mirror so that you could see what was going on, and then they’d have what you call a screen converter connected to the output of the PC that would convert into a TV signal, like a low-res TV signal. I don’t know if you’ve ever watched those – they were terrible.

But now, we’ve got screen-sharing: GoToMeeting and WebEx… all that stuff. So basically you can take the signal the person is watching on the test laptop or desktop and get a perfect copy of it back in an observation room or anyplace, and to remote viewers at the same time, which is really nice. And, if you use Voice over IP – which I recommend, like WebEx and GoToMeeting have – you get great audio out to everybody, too, for like $49 a month or whatever they charge for their service, and there are free ones.

Nancy: Unlike the hundreds of thousands of dollars one might have spent in the past.

Steve: Well, you have to get a scan converter – that’s what they were called – and you could basically take a 640 x 480, which is what screens were at the time, believe it or not, and make it into a really horrible TV picture, so you could barely read anything.

So that stuff works great. So the problem of how do we get the signal that the participant is looking at to the observer so they can follow along has been solved for quite a while, cost-effectively and efficiently. At the same time, if you want to record the thing, for posterity or just so you can look at it later or so you can create clips to distribute to people, that’s been solved for a long time. Things like Camtasia. For 10 years now, Camtasia has been to the point where it will run in the background on any PC or laptop without being a resource hog and create fairly efficient, reasonably sized files, so you can record an hour of video and audio and put it on a CD. Nobody has CDs anymore! (laughter) They think it is going to disappear faster and faster. We used to have to move all our media to another medium every five years or eight years or something. I guess it’s all in the clouds.

Anyway, so that problem got solved. It was really easy to make a recording of the stuff, so you had a recording. Well, the problem is once you move to mobile, not much of that works anymore. In terms of recording, Camtasia runs in the background on PCs and Macs, but you actually can’t run background apps like that, particularly on the iPhone, and it turns out even on the Android, because Steve Jobs didn’t want us to do two things at once, for some reason. And so, until recently, there haven’t been any screen recorders. There still aren’t really. There are a couple of companies that have them, where they did a trick where instead of having something that would run in the background, they actually built the screen recorder into a web browser. So you can basically do web browsing and create a recording that goes to your camera folder.

Nancy: What’s that called?

Steve: There’s two of them. One is called Magitest and the other is UX Recorder. They are both iPhone apps, and they’re really good at what they do. They are particularly good if you’re going to Starbucks and you have to hand somebody the phone to do, like, a five-minute test. Then you can have that running in the background while they do it.

Nancy: Fantastic. So you can literally walk out of your office door, go downstairs – we have a Starbucks downstairs here – and do a user test in real time.

Steve: Oh yeah. People do a lot of Starbucks tests now, because if what you’re testing is something where the audience is the kind of people who would be in Starbucks, or a general consumer population, then you can sit there with a stack of Starbucks cards and a little sign that says, “Ask me how to get a Starbucks card” or whatever (laughter). And you can do like 10 people in 2 hours to get your testing done. I’m kind of waiting for Starbucks to monetize this somehow for themselves.

Nancy: Yeah, rent out hoteling spots for user testing. (laughter)

Steve: But no, a lot of people do that. It’s one of the most popular ways to do mobile device usability testing, in fact, because you solve the recruiting problem out of the box.

Nancy: So, that handles recording.

Steve: That doesn’t really handle recording in a sense because most of the time what you want to test is apps. Right? It’s iPhone apps or Android apps. And those recorders will only work with URLs because they’re attached to the web browser. So you can’t run your app and create a recording.

Nancy: Right, not simultaneously.

Steve: And it gets kind of complicated. Usually what you have to do is you have to find some way to get the signal from the device to, like, a laptop, and then display it on the laptop, and then you run something like Camtasia on the laptop. And that way you can create the recording.

So then the problem becomes, “How do you get the signal from the device to the laptop?” And then you get into all kinds of strange things.

Nancy: And also the display issue, right?

Steve: What you’re displaying to the observer, that’s Part B. We’re still on Part A. (laughter) It is complicated. I have my own theories now about optimal ways to do this. The same way in Rocket Surgery, I tried to just figure out what do I think is THE best – not perfect, but most suitable for most people – way to do this stuff and then recommend that. So I’m almost at the point where I can do that for mobile testing.

So how do you get the image that this person is looking at to a laptop? Because when you get to the laptop, then actually both problems are solved. If you get it to the laptop, then you just run GoToMeeting on the laptop, and that sends it back to the conference room. You run Camtasia on the laptop, and it gives you a recording. So then the issue becomes, how do you make that connection?

There are a bunch of ways. One that, surprisingly, not that many people talk about and not many people know about is AirPlay. If you’re on the iPhone, AirPlay is built into the iPhone, so you basically need a program running on the PC or Mac called Reflector that fortunately only costs like $15. What it does is it acts as an AirPlay receiver. Usually, you receive AirPlay on an Apple TV. I guess that’s what it’s made for. Reflector runs on a Mac or PC and makes it into a receiver. All you do is you run Reflector on the Mac or PC, you have the device on the same Wi-Fi network, then you go into the iPhone, you hit the Home button three times – and click your heels three times (laughter) – and it would take you to where you control audio, right, from the bottom. There’s another icon there, if an AirPlay receiver is running nearby, and you click on that icon, and it says “Do you want to connect to this laptop?” All of a sudden, the image of what’s on your iPhone appears on the screen, on the Mac. It’s great – it’s really pretty cool.

So that’s one way to do it, but that doesn’t get you audio. (laughter)

Nancy: It gets you part of the way.

Steve: It gets you part of the way, and then you can basically have a microphone sitting in front of the person. The other general fork in the road there is we’ll take a picture of the whole thing. So you’ve got a camera of some form, and that takes two forms. One is you’ve got a stationary camera – there are these document cameras, and there’s a great one (I forget what the name is, but it’s cheap – it’s like $69) on a little arm, and you put it here, and you tell the person, “Okay, don’t move the iPhone out from under the camera,” which is easy if you have a tablet: you just put the tablet on the desk. Basically, what people often do is they put tape down and say, “Don’t move it outside of there.” So then you’ve got the camera looking down, and the camera is a USB camera that just plugs in to the laptop, then you use Camtasia or GoToMeeting or whatever. So that’s the problem solved for video that way.

The other is the sled approach, which is, get a flat piece of something – metal or aluminum, or plexiglass – and have it bend up, and then you would attach a webcam up here that’s pointing down at the device. That way, they can move the device. They can hold it and move it, because the camera is moving with it.

Nancy: That’s a good solution.

Steve: It is a good solution. The main problem with them is they tend to be kind of unwieldy because they tend to double the weight.

Nancy: And then it’s uncomfortable for the user.

Steve: It can be. People think it’s uncomfortable, or whatever. On the other hand, it works really well, because the webcam can have a microphone built into it. You’ve got audio and video all set going into the thing, and the microphone is pointing at them.

The other difference is that when you use the AirPlay solution, you’re not seeing the fingers. You’re not seeing the gestures. You lose the fingers. It turns out that if you’re just watching what’s happening on the screen and not seeing the fingers, it can be really hard to follow, because stuff moves so fast. The screens are really small, so there’s not much on them. So you tend to go like from one screen to another quickly. You don’t necessarily know where the person scrolled or clicked or whatever. You lose a lot.

With a camera, you don’t, actually. The only danger with a camera is that the person’s hand is going to be in the way of the camera, but it turns out not to be, because we’ve got to see the screen, too, so we tend to keep our hands out of the way. So that turns out not to be a problem.

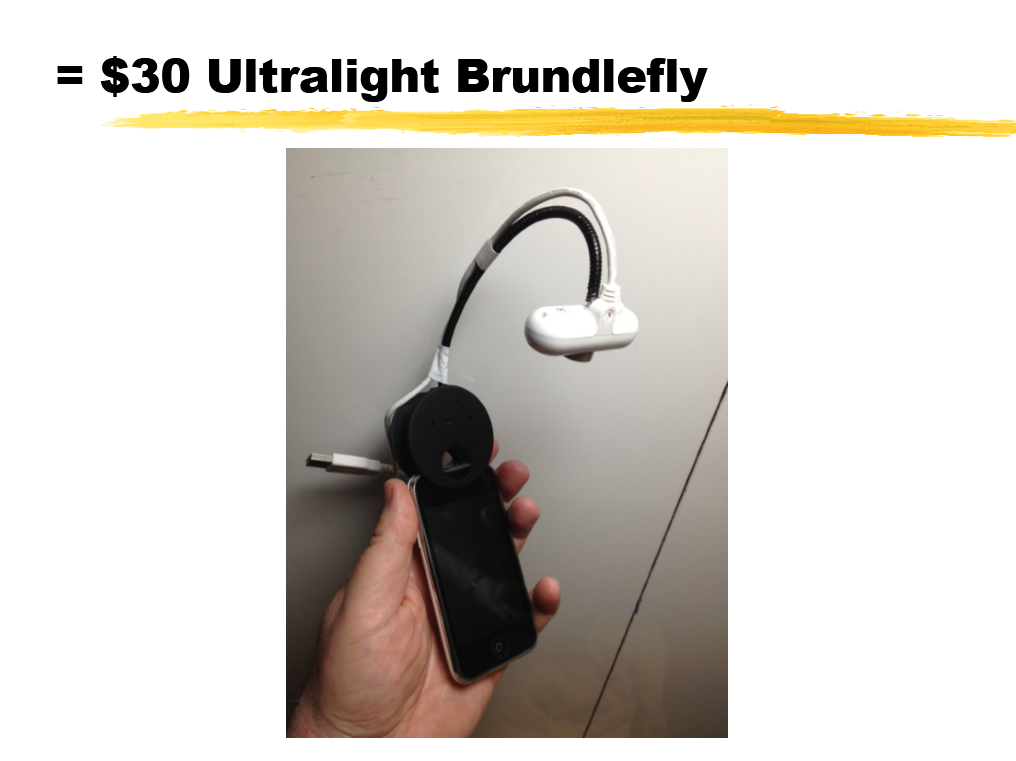

My bottom line is that the best solution is a really lightweight sled approach. I got curious about it and started to say, “Why doesn’t this exist?” and started tinkering and looking around. I researched every webcam currently in production (of which there aren’t that many) and found one that actually has all the characteristics I was looking for. It’s very small; it’s the size of a lipstick, more or less. It’s very lightweight – it weighs nothing. And it’s very cheap. It’s like $20 from Amazon.

And then I figured out, “How do you attach this to the device so that it’s sort of easy to attach and detach?” And I found a booklight that has a plastic clamp that’s secure and has a gooseneck coming up from it. I just cut the booklight lamp off of it and put a screw through the webcam into the gooseneck. And I really like it. I’ve got to put it on my site. For $30, you can make your own, and it works really well. I’ve shown it a couple times at workshops and people are like, “Will you make me one?” (laughter)

Nancy: Maybe we can talk you into showing it to viewers right now.

Steve: We could do a video! (laughter)

Nancy: That’s fantastic – great.

Steve: So I think those are the right solutions for getting the stuff back into the conference room. Either a document camera, if that’s what you have to do, or a really lightweight unobtrusive webcam that moves with the device. Say you’re doing the test and you’re there with your iPhone and I’m here. The other issue is, how do I as the facilitator see what you’re doing? You really don’t want me hanging over your shoulder. So what you do is the signal goes to my laptop which is right there in front of me, so I can watch and make sure everything is still in focus and I can see exactly what’s going on and then cue you to tell me what you’re thinking about. And then it goes from there back to the conference room.

People have done a lot of really great blog posts about solutions for this stuff, but none of them ever really seemed quite right or complete.

Nancy: Now we have a recipe for success! Thank you very much, Steve. That’s fantastic.

Steve, thank you so much for coming by the studio today. Folks watching, I can only highly recommend getting Steve’s Rocket Surgery book. In keeping with the practical tips you gave today, Rocket Surgery has lots of practical tips about doing your own usability testing on a shoestring. You don’t have to spend a lot of money. I’m sure you all know about Don’t Make Me Think, but I recommend both books highly. Thank you, Steve.

Steve: Always nice to see you.

Nancy: Good to see you.