The Immersive Experience

- The world in 3D

- Affordances

- Multimodal experiences

- Experience design

In this sample chapter from Designing Immersive 3D Experiences, author Renée Stevens breaks down the world of immersive experience.

In this chapter we are exploring the components of the overall immersive experience. We will start off by breaking down how to make 3D shapes, then we will make those shapes user friendly and easy to interact with, and finally we will look at how those interactions can engage our full sensory system. Here is what we will be covering:

THE WORLD IN 3D By breaking the complex down into smaller pieces and looking at additive and subtractive methods, we’ll see that creating 3D models is actually more elementary than you may have thought.

AFFORDANCES How we interact with physical objects in our real world can and should inspire how we interact with digital objects in a virtual world.

MULTIMODAL EXPERIENCES With so many different ways for a user to experience XR, it makes sense to engage the user in as many modes as they are used to in their physical world.

The world in 3D

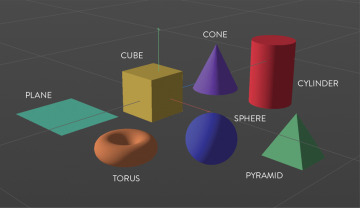

You are already an expert in three-dimensional design. I can tell you this confidently, because you live in it. Because of this, you have a deeper understanding of 3D than you do of 2D. Most designers have to train themselves in how to make a flat icon, how to take something complex and reduce it to simple geometric shapes. Of course, this doesn’t change the fact that it is hard to create something in three dimensions on a computer screen that has only two. Our understanding of 3D space through experience will allow us to know if we are designing for it correctly. Though there will be some challenges along the way, if you break them down, the process becomes more approachable. To start, shapes remain the essence of objects, and in 3D we work with the same shapes you are used to, but they become volumetric. A square becomes a cube. A circle becomes a sphere. A line becomes a cylinder. These are your primitive shapes (FIGURE 3.1).

FIGURE 3.1 Primitives. The building blocks of 3D models start with these basic volumetric shapes.

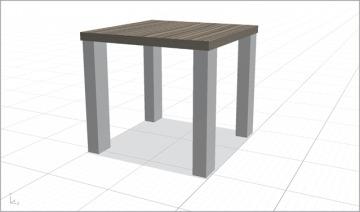

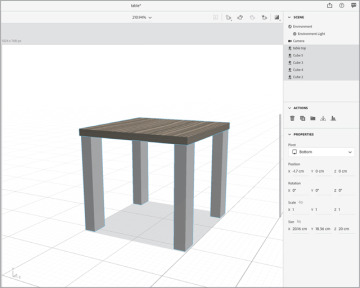

You can use these basic 3D shapes to build other shapes, just as you have probably done in your 2D practices. The use of additive and subtractive techniques can help you make more complex shapes more easily. The table in FIGURE 3.2 was created out of cubes, and only cubes. How many cubes do you think it used? 5? 6? 280? Any of these answers could be correct, and the number could be even higher. It all depends on how you build it and how you subdivide it for ultimate complexity and control of the details. When you view a 3D form in its entirety, it may seem complex, but at its core, it’s made of the familiar basic shapes (FIGURE 3.3).

FIGURE 3.2 Cubical Table. This table was created using only cubes inside of Adobe Dimension. How many cubes can you identify?

FIGURE 3.3 Cubical Table Breakdown. This table was created from five cubes, one for the top (named table top) and one for each of the table legs (named Cube 2, Cube 3, and so on). In this example, the table was created using the fewest shapes possible; however, the legs could have been created by stacking multiple cubes on top of one another if finer subdivisions had been needed within the structure of the model.
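To make the five-cube breakdown concrete, here is a minimal Python sketch that describes the same table as data: one stretched cube for the top and four for the legs. The `Box` class, the `build_table` function, and all of the dimensions are hypothetical and chosen only for illustration; they are not taken from Adobe Dimension or any other 3D program.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """A cube primitive stretched along x, y, and z (illustrative only)."""
    name: str
    center: tuple  # (x, y, z) position of the box's center
    size: tuple    # (width, height, depth)


def build_table(width=4.0, depth=2.0, height=3.0, top_thickness=0.25, leg=0.25):
    """Return the five boxes of a simple table standing on the floor at y = 0."""
    leg_height = height - top_thickness
    top = Box("table top",
              (0.0, leg_height + top_thickness / 2, 0.0),
              (width, top_thickness, depth))
    # Offsets that push each leg into one corner underneath the top.
    dx = width / 2 - leg / 2
    dz = depth / 2 - leg / 2
    corners = [(-dx, -dz), (dx, -dz), (-dx, dz), (dx, dz)]
    legs = [Box(f"Cube {i}", (x, leg_height / 2, z), (leg, leg_height, leg))
            for i, (x, z) in enumerate(corners, start=2)]
    return [top] + legs


table = build_table()
for box in table:
    print(box.name, box.center, box.size)
```

Stacking several shorter boxes in place of each leg would produce the same silhouette with more pieces, which is why the cube count for the table has no single right answer.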

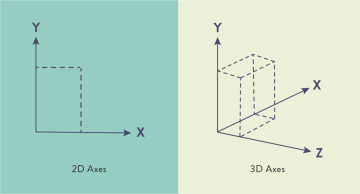

Primitive shapes are defined using a three-dimensional coordinate system that represents width, height, and depth. Locations in the system are represented through x, y, and z coordinates, both positive and negative. The x-axis is horizontal with coordinate values increasing from left to right. The y-axis is vertical with coordinates moving up (increasing) and down (decreasing). Two-dimensional images include those two axes only, but in 3D the addition of the z-axis adds coordinates indicating depth: forward and back (FIGURE 3.4). This also includes the use of volume as it extrudes a shape in all directions, including z-space. All together the three axes are called the coordinate axes.

FIGURE 3.4 2D and 3D Axes. The visual difference between 2D using x and y coordinates and 3D using x, y, and z coordinates.
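As a small illustration of how the three axes relate to width, height, and depth, the following Python sketch measures how far a set of points spreads along each axis. The function name `extents` and the sample points are made up for this example.

```python
def extents(points):
    """Return (width, height, depth): the spread of the points along x, y, and z."""
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


# Four sample points; positive and negative values are both allowed.
corners = [(-1, 0, -2), (1, 0, -2), (1, 3, 2), (-1, 3, 2)]
width, height, depth = extents(corners)
print(width, height, depth)  # 2 3 4
```

A 2D image would carry only the first two numbers; the third one exists because of the z-axis.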

As you are designing for 3D you will need to start to understand some of the additional properties of a model. Instead of just working with the fill and stroke of a shape, the attributes expand to designing an entire scene. This process has similarities to photography in many ways. You need a scene, you need a camera that you can move around to capture the shot, you need light, and you need to consider the overall composition. After capturing a photograph, you need to process it—often by moving the image file from the memory card inside the camera to your computer. The equivalent in 3D is the rendering process, which creates the final file for use in an illustration, animation, or an XR experience. Because some of the terms in this process might be new, let’s look at each of them one by one.

Splines

In addition to primitives, you can use custom paths, which allow you to create organic shapes and then make them volumetric. Most 3D programs create curves using splines. If you have worked in Adobe After Effects, these are the equivalent of paths. The process starts with creating the spline and adding extrusion to generate geometry. This results in custom 3D shapes. This concept might be familiar if you relate it to using the Pen tool to create Bézier curves, which you may know from Adobe Illustrator or other vector-based programs. For many designers who are comfortable with the Pen tool, working with anchors and paths, and adjusting the angle of a curve with each anchor point, creating and extruding splines will be a comfortable space in the beginning. In fact, some 3D programs, such as Cinema 4D (Maxon), allow you to import paths directly from Adobe Illustrator.

Combining splines with various generator methods enables you to expand what you create beyond the basic geometric shapes (FIGURE 3.5). I highly suggest getting comfortable using all the primitive shapes first, however. Once you can start to see how you can use the foundational primitives to add more complex shapes, then you might be ready to try using splines.

FIGURE 3.5 Generating Geometry. Four generators that can be used to create geometry from spline paths.
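The spline-then-extrude idea can be sketched in a few lines of Python. This example assumes a single quadratic Bézier curve (one control point between two anchors) and a straight extrusion along the z-axis; the function names, the sample count, and the control points are hypothetical, and real 3D programs offer far richer spline and generator tools.

```python
def bezier_point(p0, p1, p2, t):
    """Point on a quadratic Bezier curve at parameter t (0 = start, 1 = end)."""
    u = 1 - t
    return (u * u * p0[0] + 2 * u * t * p1[0] + t * t * p2[0],
            u * u * p0[1] + 2 * u * t * p1[1] + t * t * p2[1])


def extrude_curve(p0, p1, p2, samples=5, depth=1.0):
    """Sample the curve, then copy every sample along z to build a ribbon of quads."""
    path = [bezier_point(p0, p1, p2, i / (samples - 1)) for i in range(samples)]
    vertices = []
    for x, y in path:
        vertices.append((x, y, 0.0))    # point on the original flat path
        vertices.append((x, y, depth))  # the same point pushed back in z
    # Each quad connects two neighboring samples on the front and back rows.
    quads = [(2 * i, 2 * i + 2, 2 * i + 3, 2 * i + 1) for i in range(samples - 1)]
    return vertices, quads


vertices, quads = extrude_curve((0.0, 0.0), (1.0, 2.0), (2.0, 0.0))
print(len(vertices), "vertices,", len(quads), "quads")
```

Raising `samples` gives a smoother curve at the cost of more geometry, the same tradeoff that applies to any 3D shape.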

Mesh

Working with 3D shapes might be a trip back to your elementary geometry classes. But the good news is that they haven’t changed since then, so what you recall about those days is still quite relevant. 3D shapes are made up of points, edges, and faces. It is the relationship of all those elements collectively that makes a mesh. This mesh is the visible representation of the 3D form. For example, you can create a 3D mesh of a cube or a sphere.

The relationship between the elements of the mesh helps define the shape and the overall form. The point where two or more edges meet is called a vertex. When you have more than one, you call them vertices (FIGURE 3.6).

FIGURE 3.6 Vertices. Highlighting the seven vertices that can be seen on this 3D cube.
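To see how points, edges, and faces fit together, here is a minimal Python sketch of a cube mesh. The data layout (a list of corner points plus faces that refer to those points by index) is one common way to store a mesh, used here as an assumption rather than as the format of any particular program. Note that a cube has eight vertices in total; only seven are visible in the figure because one is hidden at the back.

```python
from itertools import product


def cube_mesh():
    """Return the vertices, edges, and faces of a unit cube."""
    # Eight corners: every combination of 0 or 1 on x, y, and z.
    vertices = [(x, y, z) for z, y, x in product((0, 1), repeat=3)]

    faces = []
    for axis in range(3):                     # the axis the face is perpendicular to
        b, c = [k for k in range(3) if k != axis]
        for value in (0, 1):                  # the two opposite sides of the cube
            face = []
            for vb, vc in ((0, 0), (1, 0), (1, 1), (0, 1)):  # walk around the square
                corner = [0, 0, 0]
                corner[axis], corner[b], corner[c] = value, vb, vc
                face.append(vertices.index(tuple(corner)))
            faces.append(face)

    # An edge joins two neighboring corners of a face; a set removes shared duplicates.
    edges = {frozenset((face[i], face[(i + 1) % 4])) for face in faces for i in range(4)}
    return vertices, edges, faces


vertices, edges, faces = cube_mesh()
print(len(vertices), "vertices,", len(edges), "edges,", len(faces), "faces")
```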

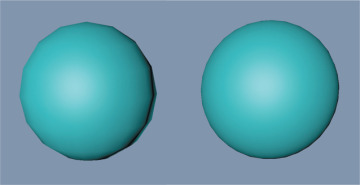

When multiple points and edges close a complete shape, they create a polygon. Triangles, squares, rectangles, and pentagons are all examples of polygons. Multiple polygons help form the full, complete 3D shape. If you are making a cube, then each polygon you create would be a square. So, to create the full cube, you would have six polygons (that are squares) in total. As you increase the complexity of shapes, you will also increase the number of polygons needed to create each one. However, the number of polygons that’s advisable to use depends on where you will be using your model. The more polygons you have, the smoother your model will appear, so it would make sense to add as many as you need to make it look the best.

The tradeoff to remember, however, is that high polygon counts require a lot of processing power to manipulate. In other words, the more polygons you use, the greater the chance of negatively impacting the speed and quality of the model’s loading time (FIGURE 3.7). This is even more important in XR. If you are creating an animation for a film, then you want the highest quality images because the playback won’t be affected as much. In XR, however, the processing power is limited, especially in mobile AR and WebAR where the experience typically relies on the processors in your phone. So, the more efficient you can make your shape, using as few polygons as needed, the faster the load time of your XR experience and the more optimized the performance will be for the user. If your file size is too large, the object may never even show up—often there is no warning provided as to why. To help you avoid this frustration, it is better to think efficiently from the beginning. Just as an image that has not been optimized for the web can cause page loading failures and delays, the same is true for 3D models in AR.

FIGURE 3.7 Polygons. The left sphere has 16 segments; the right sphere has 31 segments. The more segments, the more polygons and the smoother the edges of the shape. However, the more polygons, the larger the file size too.
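A rough calculation shows how quickly polygon counts grow as a sphere gets smoother. This Python sketch assumes a typical latitude and longitude sphere, with triangles at the two poles and four-sided polygons everywhere else, and it assumes the same number of horizontal rings as vertical segments. The figure only states the segment counts, so the exact totals in any given program may differ.

```python
def uv_sphere_counts(segments, rings):
    """Estimate face and triangle counts for a latitude/longitude sphere.

    segments: divisions around the sphere; rings: bands from pole to pole.
    """
    if segments < 3 or rings < 2:
        raise ValueError("need at least 3 segments and 2 rings")
    cap_triangles = 2 * segments         # a fan of triangles at each pole
    band_quads = segments * (rings - 2)  # four-sided faces in between
    return {
        "faces": cap_triangles + band_quads,
        # Each quad becomes two triangles when the mesh is triangulated.
        "triangles": cap_triangles + 2 * band_quads,
    }


low = uv_sphere_counts(16, 16)
high = uv_sphere_counts(31, 31)
print(low["triangles"], high["triangles"])  # 480 1860
```

Under these assumptions, roughly doubling the segments nearly quadruples the triangles, which is why a small bump in smoothness can have a large effect on file size.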

Material

Once you have a mesh created, you likely have a bunch of gray shapes, looking like clay. It will not look too exciting yet, not until you start to add more physical qualities to it. The first place to start is with materials. These are all the physical properties that you add over the full mesh. If you think of your mesh as the structure, or the body, then the materials are like the clothes or upholstery that you drape over it and that take on the same shape as the structure. Materials are essentially the properties that you can add on top of the object that will determine how it looks. Just like materials in the real world, these digital materials determine how light interacts with the different surfaces, how the color looks, and what the texture looks like. Materials can be transparent, opaque, or reflective, for example.

Materials that include a texture are a bit different from your standard material, because they use an image wrapped around the skin of a 3D object. You can apply and layer multiple materials such as wood, brick, or concrete, just to name a few. However, you can apply only one texture. Textures work only when they are added to a material. So, you can’t have a texture without defining how that texture will be seen on an object.

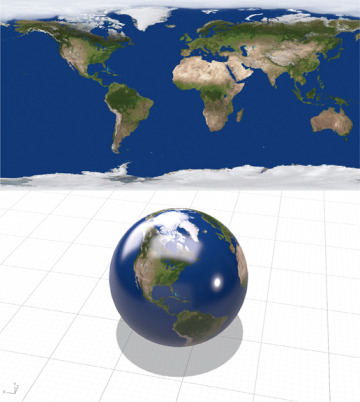

Another way to add an image to the surface of your model is through the use of a UV map. This flattens a 3D model’s x, y, and z coordinates into a 2D tile. The name UV doesn’t stand for anything, but is instead used methodically. The x-axis translates to the U, the y-axis translates to the V, and then some maps also use the z-axis, or depth, represented as W.

Ordered alphabetically, the use of the letters U, V, and W is to create a distinction between the use of x, y, and z for coordinates. These UV maps are flattened 2D representations of 3D space. You can use an image, such as a design or a photo of Earth, and wrap it around the shape of the mesh, such as a sphere (FIGURE 3.8). This will make a sphere look like the Earth. Similarly, you can use image textures to create fast mockups of products to see how a design will look on a 3D object. For designers, it is common practice to create these UV maps in Adobe Photoshop, Adobe Illustrator, or similar applications, and then import the final image to wrap around the object using a 3D program.

FIGURE 3.8 UV Mapping. The top image shows the 2D UV map, and the bottom image shows that image wrapped around the 3D model to illustrate Earth.
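For a sphere, the flattening can be expressed as a short formula: longitude becomes U and latitude becomes V. The Python sketch below assumes an equirectangular map (the layout of a typical flat world map), a y-axis that points up, and U and V values that run from 0 to 1. These conventions vary between programs, so treat the function `sphere_uv` as an illustration rather than the mapping any specific tool uses.

```python
import math


def sphere_uv(x, y, z):
    """Map a non-zero 3D point to (u, v) on a flat equirectangular image."""
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length      # project onto the unit sphere
    y = max(-1.0, min(1.0, y))                        # guard against rounding error
    u = 0.5 + math.atan2(z, x) / (2 * math.pi)        # longitude -> horizontal position
    v = 0.5 + math.asin(y) / math.pi                  # latitude  -> vertical position
    return u, v


print(sphere_uv(1, 0, 0))   # a point on the equator lands in the middle of the image
print(sphere_uv(0, 1, 0))   # the north pole lands on the top edge (v = 1)
```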

To take more control of the appearance of your model, you can also add shader information to a material. This holds data about the object’s appearance. As the name implies, shaders can change the appearance of darks and lights using gradients, or even by creating custom shading. These can also be used to create effects that make the object look glitchy, electric powered, or even change colors. Once you start getting more comfortable working with your 3D models, materials, and textures, you can venture into the use of shaders to get a more custom or stylized look to your models.

Camera

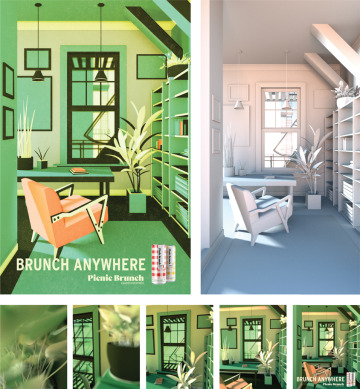

Your eyes are a camera looking at the scenes in your physical world. If you start to think like that, then the idea of the camera view is in essence where you are looking within a provided space. In video or film you have control over where each viewer is looking and when. This is not true when working with an individual 3D model, but you can set up a fixed viewpoint that determines how people will see an object. You set the perspective, which will be how people will first see the model when they place it onto a physical object using AR. There is an option to create a fixed view using a camera to ensure that users always see the object a certain way no matter where they look or move. If you don’t have a fixed view, then a user may have full control to view the object from all angles as they choose. You can think of the camera as the viewpoint to an object or a scene. Varying camera views can be seen across the bottom of FIGURE 3.9.

FIGURE 3.9 Picnic Brunch. 3D software was used to create a printed poster advertisement, as well as a short animation for promotion of Picnic Brunch on Instagram. The goal of the project was to highlight different settings where one could brunch, other than a fancy restaurant (hence “Brunch Anywhere”). When COVID-19 hit, everything shut down, so places like apartments and balconies became the focus of the campaign. The image on the top left shows the completed rendered image. The top right shows the full scene before materials and textures were added. The bottom thumbnails show the varying camera views throughout the 3D scene.

Designer, illustrator, and animator: Jared Schafer; Creative director: Paul Engel; Executive producer: Brendan Casey

Light

Light is the essence of how you visually experience the world. To see any object or scene you create, you need to have some light on it. You can determine the qualities and characteristics of the light you add. As you need light to make a photograph, you need light to see and understand a 3D scene.

In XR, light becomes an essential part of the scene. It isn’t just that the light can brighten some areas and darken others, but rather that it feels like it belongs in that space. This is such an important topic that we will be exploring it more in Chapter 11, “Color for XR.” But for the moment, look around you, wherever you are, and whatever time of day it is. Look for shadows. Notice where they are, what direction they go, how dark they are. If you were to add another object into that same space, it would need to have a shadow that mimicked the others to appear realistic. Just as with a photo illustration, where you make selections from multiple photographs and composite them into one image, you need to create a universal light source. Each element should have shadows in similar directions based on the perceived placement of the light.

The good news is that there is some great computer vision technology that you can use to assist with this. However, you still need to know how to create multiple lighting setups. This includes understanding environmental versus directional lights. Sunlight creates stronger and deeper shadows than an artificial directional light in a scene. We know this from experiencing light; the next challenge is re-creating it in a digital environment. We will explore much more about lighting and break down the different kinds of lighting, both default and manual options, in Chapter 11.
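The idea of one universal light source giving every object a matching shadow can be shown with a little geometry. This Python sketch assumes a single directional light (parallel rays, like sunlight), a flat floor, and a y-axis that points up; the function `ground_shadow` and the sample values are hypothetical.

```python
def ground_shadow(point, light_direction, floor_y=0.0):
    """Follow a light ray from `point` until it hits the floor; return where it lands."""
    px, py, pz = point
    dx, dy, dz = light_direction
    if dy >= 0:
        raise ValueError("the light must point downward to cast a shadow on the floor")
    t = (py - floor_y) / -dy              # how far along the ray the floor is
    return (px + t * dx, floor_y, pz + t * dz)


light = (1.0, -1.0, 0.0)                  # shining down and toward +x
print(ground_shadow((0.0, 2.0, 0.0), light))  # (2.0, 0.0, 0.0)
print(ground_shadow((3.0, 1.0, 1.0), light))  # (4.0, 0.0, 1.0)
```

Both shadows are pushed in the same direction (toward +x), and the taller point casts the longer one. An object whose shadow pointed a different way would immediately look out of place.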

Scene

When you create for 3D, you are designing a full scene. This may feel like a lot during your transition from designing for a single paper or screen to designing an entire scene. So, just like everything else, do this gradually. Work on creating a single primitive first. Then work on creating more complex objects using only primitives, after which you can explore creating more organic shapes. From there, you can add lighting to mimic the kind of space you are envisioning. At this point, you will have a lot of gray shapes that may be well lit but not too exciting. Take it to the next step by adding materials and textures. Once you have all of these set, you can start looking at the bigger picture. This includes the camera and the environment.

The environment is the space that surrounds a 3D object and contains the lighting and ground plane. In the case of VR, the environment will be a key component to creating the digital space people will walk through. When you think of the environment, think of creating a diorama (FIGURE 3.10). As you may recall from your childhood school projects, a diorama is a miniature replica of a larger 3D scene. They are very helpful for making a visual prototype of a scene. In Chapter 5, “Creating the Prototype,” we will be talking all about prototyping and its benefits for the design process. As you think of designing the environment, it may help you to picture a small box scene, as you would make for a diorama. At a minimum you need to have a floor. This is where all the objects will be anchored. Even if you are creating only one 3D model, you still need to identify the location of the floor, the horizontal plane that sits at a fixed height on the vertical y-axis. If you would like something to touch the ground, then you need to position it accordingly. If you would like it to float above the ground, then you need to adjust your y-axis coordinate to lift the object up. The ground location will help set the relationship of the object itself to its environment. The visual feedback that confirms this will be the placement of the shadows created by the lighting and where the shadows fall. All the different elements are coming together.

FIGURE 3.10 Diorama. Top view of a fourth-grader’s wetland diorama science project.

Photographer: Ira Susana for Shutterstock
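Positioning an object relative to the floor comes down to one number on the vertical axis. The Python sketch below assumes the y-axis is vertical, as described earlier in the chapter, and that the object's position refers to its center, which is a common default but not a universal one. The function name `resting_y` and its parameters are made up for this example.

```python
def resting_y(object_height, floor_y=0.0, hover=0.0):
    """Return the y coordinate for an object's center.

    hover = 0 places the base exactly on the floor; a positive hover
    lifts the object so that it floats above the floor.
    """
    return floor_y + hover + object_height / 2


print(resting_y(2.0))             # 1.0 -> a 2-unit-tall object resting on the floor
print(resting_y(2.0, hover=0.5))  # 1.5 -> the same object floating half a unit up
```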

For AR, you may only need to identify this ground plane and the object. However, for VR, you may need to design the full space. This could include a ceiling or a sky and possibly walls. You can create these with flat images or through the layering of more 3D models to develop the full space. Remember the diorama; you want to create all the different elements to re-create the space using your x, y, and z coordinates. Each element should be oriented in the space just as it appears in the miniature replica.

This is where you can lean on your skills in composition and layout. Finding a visual balance in 3D space will take some time and really lots of trial and error. The more you design spatial compositions, the more you will see how you can lean into your skills: your understanding of visual weight and balance from other design mediums. We will be exploring this in depth as we discuss creating a hierarchy and understanding the theories of perception later in the book.

Just to recap, your full 3D scene includes:

Objects with materials and textures

Environment

Lighting

Camera(s)

When you have all of these ready to go, then you can move to the final stage of rendering.

Render

When you are working within 3D software, it is important to know that what you are looking at as you work may not exactly match the final version. Once you create your full model and scene, then you need to render it. 3D rendering is the process of translating your 3D content into a form you can share with your audience (FIGURE 3.11). While many programs have render previews, these often are not high quality and as a result don’t show an accurate visual of the materials and lights. It isn’t until you do a full render that you, in essence, export your final model or scene. Some programs offer an option to do a lower resolution render, which I would recommend doing to check the result of major edits and changes in lights, materials, and environmental decisions.

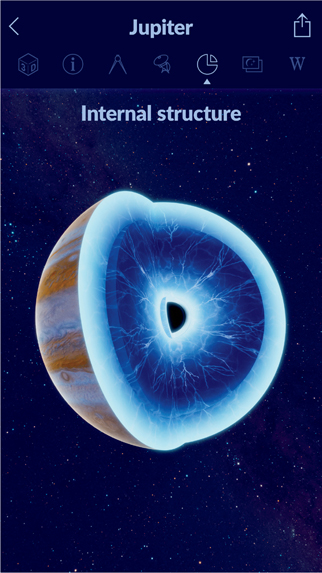

FIGURE 3.11 The Internal Structure of Jupiter. The fully rendered 3D model within the Star Walk 2 app.

Designer: Vito Technology

With AR and MR, the need for rendering has greatly increased, as models need to be seen changing in real time in the user’s experience. This has pushed programs to use a real-time rendering engine. Unity and Blender both offer this option. Blender is free and open-source 3D software that includes the EEVEE real-time renderer. Unity offers a Scriptable Render Pipeline that allows you to optimize for different software. For higher quality, there is the High Definition Render Pipeline (HDRP), and specifically for mobile use there is the Lightweight Render Pipeline (LWRP), since renamed the Universal Render Pipeline (URP). Vectary provides real-time rendering within a web-based program, using physically based rendering (PBR) to compute lights, shadows, and reflections in real time. Because it is a web program, it relies on your internet speed and connection to work.

Other popular 3D modeling programs for designers are Cinema 4D and Adobe Dimension. The one nice thing about Adobe Dimension is that you can work with Adobe Photoshop and Illustrator to create your own custom models from 2D shapes. Rendering from Adobe Dimension also allows you to select a Photoshop export instead of just the 3D model.

3D files

The purpose of a 3D file is to store all the information about the 3D models, including the overall shape geometry. Some 3D file types include only the data as it relates to the shape itself, while others also include properties, such as materials, scene elements, and animations. Because each of these properties adds to the complexity of the model, they also increase the file size.
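To give a sense of what a geometry-only file looks like inside, here is a minimal Python sketch that writes a single square in the Wavefront OBJ format, a widely used plain-text 3D format. OBJ is chosen here only as an illustration; the function name `to_obj` and the sample square are assumptions for the example.

```python
def to_obj(vertices, faces):
    """Return OBJ text: one 'v' line per vertex and one 'f' line per face."""
    lines = [f"v {x} {y} {z}" for x, y, z in vertices]
    # OBJ counts vertices starting from 1, so shift each 0-based index up by one.
    lines += ["f " + " ".join(str(i + 1) for i in face) for face in faces]
    return "\n".join(lines) + "\n"


square_vertices = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
square_faces = [(0, 1, 2, 3)]
obj_text = to_obj(square_vertices, square_faces)
print(obj_text)
```

Every extra vertex and face adds another line to the file, so the link between geometric complexity and file size is easy to see. Formats that also store materials, scene elements, or animations carry that data on top of the geometry.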

With XR, file size is an important issue that you need to pay attention to. Depending on the device you are designing for, the file size limitations may change. For mobile AR and WebAR, you need to optimize the object to reduce how much processing of the image is needed on a mobile phone. We have talked about how more complexity needs more processing, which needs more power, which creates heat. Because you can’t have a head-mounted display (HMD) that produces a lot of heat while it’s worn on the face, you must be thoughtful about how much processing is needed to display your designed models.

The best way to reduce file size is to reduce your polygon count. You are trying to achieve a balance between the appearance of the model and the performance. More complex shapes will affect the load time and possibly slow down the experience for the user. If your computer can’t process the full file, it will often glitch or even crash the program, which can be frustrating for you and for any developer you are working with. This can be even worse for the users. So, as you build, you need to plan for the technology you want to use. It is fun to play around with materials and shaders, but you don’t want them to interfere with the overall goal of the experience by increasing file size and reducing performance. VR experiences that require tethering to a high-powered PC allow you to off-load this processing, but as VR becomes more mobile as well, the same considerations will apply.

There are a number of file formats that you can use in the 3D world (FIGURE 3.12). The format you end up using depends on what software you work with. It is important to know which file format you will need to add a model to your XR experience and then work backward from there. Each program will render different file formats, so you could choose your 3D program accordingly, or you could use a file converter. If your file isn’t too large, you could try a web-based format converter, such as meshconvert.com. To convert larger, more complex files, you will need a desktop application, such as Spin 3D (NCH Software). File conversion will add more time to your process, so account for that if you plan on needing to convert files based on the software you choose.

FIGURE 3.12 3D File Formats. The most commonly used 3D file formats broken down.

How do you choose the best file format with so many choices? Loading speed and quality or resolution capabilities are your best guides, especially for mobile AR, where the current processing capabilities are still pretty limited. If you are designing for iOS using ARKit, you are encouraged to use the USDZ format. This is just one example of why there are so many different file formats for 3D, as the industry is divided based on the other companies they currently support or are connected to in some way.

One way to speed up your creation of 3D assets is to purchase a license to use one that is already made. These are some places you can purchase and download 3D models and textures:

TurboSquid

Sketchfab

Google Poly

Adobe Creative Cloud and Mixamo

Unity Asset Store

As you explore these options, you will see that you can apply filters to narrow your search based on the program you are using to create and render your models or on the file format you are looking for.