Multimodal experiences

What was one of your biggest fears as a child? According to the Bradley Hospital in Providence, Rhode Island, whose expertise is in mental healthcare for children, the second most common fear is the fear of the dark. Number one is monsters. Both connect to a fear of the unknown and trying to determine what is real and what isn’t. Why is one of the most common fears, especially for children, being afraid of the dark? What about the dark makes things scarier?

A lot of the research discusses the connection to separation anxiety; being separated from their caregiver makes the child more on edge, with the added factor of the darkness. A room is the same in the day and night. The difference is that the child can’t easily see their full environment in the dark, and their fear of the unknown, because their sight is limited, becomes heightened. All of this fear comes from a change in how and what they can see. If a child can’t see what is around a corner, under a bed, or in a closet, then their imagination will come up with a story. The fears of a child who is blind may not be as focused on what they see, even if they can sense darkness and light. They may have more anxiety when other senses are restricted in some way. If they can’t hear what is happening around them, either because of sound being restricted or a loud sound overpowering all other sounds, then this may cause a similar sense of fear.

Our senses have a deep impact on our experiences. We are so used to them that we may not even realize how much we rely on them. When one of our senses is limited in some way, it breaks our equilibrium and can cause fear. Although we often think of our senses as separate, they are actually highly connected and reliant on one another. We are so accustomed to how what we see, hear, and feel work in harmony that we really only notice when there is a breakdown in the system.

As you design for XR, you are designing a sensory experience, with information and feedback available from multiple modalities, engaging multiple senses at once. If the balance between the different sensory inputs is off, then the user will likely react to it. This is the same reaction a child has when they have to walk around their bedroom in the dark. Without being able to see the room as they usually do, everything looks scarier, they question every sound, and as they feel their way through the dark, they jump at any unexpected thing they feel under their feet. This could be your user’s experience in the new unknown world you invite them to enter. However, if you use the physical world as inspiration for how certain senses are engaged and mimic these in an immersive experience, that balance will provide comfort to the user. The key is making an emotional connection, one that the user may not even realize is happening. Creating something with these visceral qualities is easier said than done. To do so, you have to understand how our senses work so you can successfully design for them. This does not mean just knowing what our senses are, but rather our perception of our senses and how they work together.

Visual

The first sense that you may think of, especially as a designer, is our sense of sight. This is our visual ability to interpret the surrounding environment, and it relies on perceiving the waves of light that reflect off objects in order to see them. This is also how we see color, the visible spectrum, also referred to as the human color space. It is important to understand how our visual perception influences our immersive experiences. In Chapter 8, “Human Factors,” we discuss further how our brains perceive the elements in a design and in an environment. But for the moment, consider the user’s point of view and feeling of presence, the sense that they are actually in the space they see. Both have an important impact on their relationship with an experience. In VR, you want the user to believe they are in the virtual world they have entered. If that world defies gravity, then all the visuals should reflect that. If you want to show what it looks like to have a migraine, as Excedrin did in a VR ad campaign, then having a first-person point of view is essential to see what someone might see and experience with a migraine. If you want someone to be able to walk through a historical place, that may be best experienced from a second-person viewpoint, where the story can be told to you. You want to choose the point of view that makes the most sense for the content.

Another visual consideration is where the viewer is looking. Gaze input allows a scene to react to where the user looks. This can customize the experience, but can also help to optimize the amount of processing power the experience needs. If you know what a user can see, then you can refresh that view at a faster rate than the rest of the scene to help optimize the use of memory on a device. It can also be an opportunity to have interactive elements stand out in a way that helps to guide the user to the next step in the experience. To determine where a user is looking, many head-mounted displays include eye-tracking sensors. These can assist the user with conscious and unconscious interactions and movements. With a 360-degree experience, there can be times when a user is looking or facing the “wrong” direction. If you know where the user is looking and where they are supposed to be looking instead, then you can create a visual guide, such as a path or arrow, that leads them to the view that enables them to continue.
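To make the idea of guiding a user back to the right view more concrete, here is a minimal sketch in Python. It assumes the device reports the user’s horizontal gaze direction as a yaw angle in degrees that increases as the user turns to the right; the function names and the 30-degree comfort zone are hypothetical choices for illustration, not values from any particular headset or SDK.

```python
from typing import Optional


def yaw_offset_deg(gaze_yaw_deg: float, target_yaw_deg: float) -> float:
    """Signed shortest rotation from the gaze to the target, in (-180, 180].

    Positive means the target is to the user's right (assumed convention).
    """
    diff = (target_yaw_deg - gaze_yaw_deg) % 360.0
    return diff - 360.0 if diff > 180.0 else diff


def guidance_hint(
    gaze_yaw_deg: float,
    target_yaw_deg: float,
    comfort_zone_deg: float = 30.0,  # hypothetical threshold
) -> Optional[str]:
    """Return which way a guide arrow should point, or None if no guide is needed."""
    offset = yaw_offset_deg(gaze_yaw_deg, target_yaw_deg)
    if abs(offset) <= comfort_zone_deg:
        return None  # the user is already looking close enough to the target
    return "right" if offset > 0 else "left"


if __name__ == "__main__":
    # The user faces forward (0 degrees) while the next step is behind and to the left.
    print(guidance_hint(gaze_yaw_deg=0.0, target_yaw_deg=250.0))
```

The modulo step matters because angles wrap around: a user looking at 350 degrees is only 30 degrees away from a target at 20 degrees, so the guide should not send them the long way around.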

Visual perception is multifaceted and an essential foundation of all design, so we will continue to talk about the best practices and tips that you can use to create robust visual experiences for users. These are just the main components that you need to understand for now, as they relate to creating a multimodal experience.

Auditory

Sound design is an increasingly essential skill for designers today. A good understanding of best audio practices, even understanding optimal sound levels, is a great skill that can be used in many different kinds of design. From interaction design to motion design, and of course XR, sound plays an important role in storytelling and the experience. Again, it isn’t just that you want to include sound. It is important to understand our ability to perceive sounds. Part of the inner ear, called the cochlea, detects the vibrations of sound waves and converts them into a format the brain can read: nerve signals.

Sound plays an important role in how we understand our environment, especially as we move through a space. Our proximity to a sound changes the experience of it. If you hear something that is loud from far away, you can tell both that it is loud and that it is coming from a distance. Sounds change as we move. Sound that mimics the audio in our physical world has to be spatial as well. Sounds cannot just be coming from one direction. If you have ever watched a movie in surround sound versus having just two speakers on either side of the screen, then you know the emotional impact of this difference. With sound surrounding you, you feel as if you are there in the same scene as the characters of the film. In XR we will use ambisonics to create and share 3D sound. There is so much to discuss and explore around this topic that we will be spending all of Chapter 12, “Sound Design,” diving into how to design a full auditory experience.
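As a small illustration of how proximity changes a sound, the sketch below uses the inverse distance law, a common simplification in which loudness falls off in proportion to distance. This is an assumption chosen for the example, not the method used by any specific audio engine, and the function names and the 1-meter reference distance are hypothetical.

```python
import math


def distance_gain(distance_m: float, reference_m: float = 1.0) -> float:
    """Volume multiplier (0 to 1) for a sound source at the given distance.

    Inside the reference distance the sound plays at full volume;
    beyond it, the gain falls off as reference / distance.
    """
    if reference_m <= 0:
        raise ValueError("reference_m must be positive")
    return reference_m / max(distance_m, reference_m)


def gain_to_db(gain: float) -> float:
    """Convert a linear gain multiplier to decibels."""
    return 20.0 * math.log10(gain)


if __name__ == "__main__":
    for meters in (1.0, 2.0, 4.0, 8.0):
        gain = distance_gain(meters)
        print(f"{meters:>4} m  gain={gain:.3f}  ({gain_to_db(gain):.1f} dB)")
```

Under this model, each doubling of distance makes the sound roughly 6 dB quieter. Distance is only one cue, though; direction and the acoustics of the space also shape how spatial a sound feels.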

Olfactory

Smell is something that holds strong memories. Think of the smell of spring rain, or the cologne or perfume of someone you are close to. Even our own homes have distinct smells: We associate certain smells with people we visit often. Although smell may not be something that you think of when you consider digital experiences, it is something that some in the field are researching and have begun testing.

Understanding the powerful connection of smell and experiences, the Imagineering Institute in Nusajaya, Malaysia, has created 10 digital scents including fruity, woody, and minty. The research in this area has proven beneficial. The activation of our senses enhances the full immersion of an experience, specifically for VR and AR, and our sense of smell has a large role in that. While this technology is nowhere near ready to be used, the Imagineering Institute’s research is important because of the potential digital scents have for XR. It is plausible that in the future, using AR, you might be able to see and smell a restaurant’s dish from home before you decide to order takeout.

Haptic

One of the downsides of touch screens is that they are so smooth and flat; they don’t offer any tactile feedback to the user. To help overcome this challenge, haptic technology re-creates the feeling of touch through vibration or motion feedback. The term haptic relates to the sense of touch, and in our physical world it includes nonverbal communication such as a handshake, high-five, or fist bump. Can you think of any interactions on a smartphone that provide a haptic response? You may remember feeling a vibration when you delete something or when you select something incorrectly. It is more common for us to remember the feedback that had a negative connotation, as the vibration is typically stronger. Digital haptics reinforce an action, the cause, that makes a reaction, or effect, occur. Not all haptics are the same. The intensity of the haptic should reflect the action itself, and if done right, the user may not even realize that the haptic occurred.

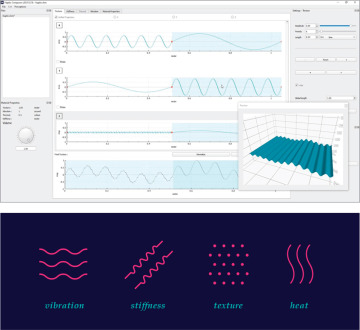

Depending on the software and devices you are designing for, you may need to use specific software to design this haptic feedback. For example, Interhaptics offers a pair of applications to help create realistic interactions (Interaction Builder) and haptics (Haptic Composer) for XR. With Haptic Composer, you can create haptic material visually (FIGURE 3.15). Haptics are already part of everyday devices: If you turn on the flashlight on an iPhone, there is a haptic response. (Go check it out now, if you don’t believe me.) You may not remember this, but when you are aware of it and pay attention, you will start to notice in how many places you receive this touch response in your interactions. The goal is to re-create experiences in the digital world to work similarly to our physical world, so if done right, haptics aid so well in communicating that they become natural. They use a combination of touch and also proprioception, which we will explore next.

FIGURE 3.15 Haptic Composer from Interhaptics. To design haptics, you create an asset (haptic material) within the Haptic Composer, which stores the representation of haptics in four perceptions (vibration, textures, stiffness, and thermal conductivity).

Images: Interhaptics

Haptics in XR are typically found in hand controllers, which can provide subtle feedback to the user that they have moved digital objects successfully. If you are designing for mobile AR, you can use the haptic technology already built into the phone. Haptic response has also been built into game controllers such as the DualSense controller for Sony’s PlayStation 5, which is pushing the boundaries of what is possible in haptic design to create responsive sensations for the players.

Though this feedback can enhance the overall experience, it can cause some problems for users that you will want to consider. For example, suppose you are using mobile AR and your experience is using video capture, sound capture, and possibly the internal gyroscope. In this situation, the shake of the haptics could provide unwanted, excessive feedback. If a phone is handheld, most of the vibration is absorbed by the hand, but the sound and motion could still be picked up in the video recording. If the phone is on a hard surface, then the haptic sound might be much louder. This is why you may feel the feedback when you select the camera on your phone, but not when you take a photo or tap Record.

It is important to use haptics cohesively. You want to make sure that the level of vibration matches the motion, but you also want to make sure that similar interactions receive the same level of feedback. This will communicate the similarity of the actions to the user, just as designing buttons in the same color and size groups them together visually. People will start to associate more intense feedback with something negative, so every time you use it somewhere else, they will think that they have done something incorrectly.
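One way to keep haptics consistent is to define a small set of patterns once and have every interaction look up its pattern by category, rather than choosing vibration values screen by screen. The sketch below shows that idea in Python. Every name and number in it (the categories, interaction names, intensities, and durations) is a hypothetical placeholder, and it does not call any real haptics API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HapticPattern:
    intensity: float  # 0.0 (off) to 1.0 (strongest); illustrative scale
    duration_ms: int


# One pattern per category of interaction (hypothetical values).
PATTERNS = {
    "confirm": HapticPattern(intensity=0.3, duration_ms=20),
    "move": HapticPattern(intensity=0.2, duration_ms=10),
    "error": HapticPattern(intensity=0.9, duration_ms=60),
}

# Similar interactions share a category, so they always feel the same.
INTERACTION_CATEGORY = {
    "select_object": "confirm",
    "toggle_setting": "confirm",
    "drag_object": "move",
    "invalid_selection": "error",
    "delete_failed": "error",
}


def pattern_for(interaction: str) -> HapticPattern:
    """Look up the shared haptic pattern for a named interaction."""
    return PATTERNS[INTERACTION_CATEGORY[interaction]]


if __name__ == "__main__":
    print(pattern_for("select_object"))
    print(pattern_for("invalid_selection"))
```

Because the strongest pattern is reserved for the error category, a user never receives intense feedback for an action that succeeded.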

Communication requires an exchange of meaning, and even though this is a nonverbal form, haptics still communicate messages to the user. Using this technology can go a long way in providing instant feedback to the user about their actions.

Proprioceptive and kinesthetic

Close your eyes, and try to touch your index finger to your thumb. Try it on both hands. If you don’t see your fingers, how can they find one another? They can find each other because of proprioception, the sense from within. Often referred to as the sixth sense, the perception of the user’s position is an essential component for immersive experiences. It is one thing to be looking at a digital environment, but it brings it to a much higher level if you react to the changes in the environment as if you are there.

As you put on an HMD, you will be relying on your internal senses to help you use a hand controller, make hand gestures, and move—even while you can’t see your physical body.

In addition to proprioception, our kinesthetic sense also doesn’t rely on information from the five senses, and these two work together in sequence. Once you have an awareness of where your body is, thanks to proprioception, the kinesthetic sense helps you to actually move. If you can feel where your feet are, you use kinesthesia to walk, run, or dance.

It is important to know that our bodies are using more than just our five main senses in an immersive experience. It isn’t imperative that you understand how proprioception works, but it is important to understand what it is. You need to be able to use these senses to your advantage as you design to ensure that people can actually interact with an environment and move through it, even if they are not able to see their hands and feet.

It all makes sense

Creating a multimodal experience truly makes sense. (Get it?) In XR, multimodal, or multi-sensory, is the combination of visual, auditory, tactile, proprioceptive, and sometimes even olfactory input to create a fully immersive experience. Multiple modalities are used effectively within many different industries, from transportation to education. If you listen to a podcast, you can hear someone talking, a single modality, but you don’t see their body language as you would in an in-person lecture or even a virtual webinar. If an in-person speaker uses visuals as they speak, then they are using a variety of modes to communicate the message. The words that they speak, the tone of their voice, their body language, and the visuals they show all work together to help you, as the viewer, comprehend a message. The wider the variety of modes that are used, the more ways that you can connect and engage with the information being shared, both verbally and nonverbally, and the more engaged and connected you will become with the experience. If someone tells a story while showing you an image that you find disturbing in any way, your whole body will react to it. The level of connection to the experience will change the user’s response too.

Our somatosensory perception translates all these stimuli and combines them to define what the body senses, and therefore knows, on a multidimensional level. This helps you understand what is happening around you and inside you at the same time. All of this happens within the parietal lobe in the back of the brain, which helps us combine our senses to understand spatial information. I know that’s a lot of brain science, but now you know why our perception works the way it does. To simplify matters, just remember: The more senses you stimulate in an experience, the better the user will understand the space and the more emotionally connected they will be to it.

As we create XR experiences, we look to our real-world experiences to inform the virtual and augmented worlds. In our physical spaces, communication is multimodal. You can read someone’s emotion from across the room just by looking at their facial expressions. You can show someone where something is by pointing in a direction, or you can reinforce spoken instructions by using gestures. Communication uses a wide range of input modalities, so you want to include these in your digital worlds too.

Accessibility

Just as it would be shortsighted to have the office for disability services on the second floor of a building without an elevator (yes, this has actually happened), it would be equally shortsighted not to use opportunities to engage a variety of senses in your experience to make it more accessible.

When it comes to accessibility in XR experiences, doing nothing isn’t an option. The truth is that a little can go a long way. The subtle vibration of a device can provide great feedback to the user without needing to make any sound. This benefits those who are hard of hearing or deaf, but also those who have the sound turned off because they are in a public space. It is important to understand that people will need different accommodations for different reasons throughout the day, at different locations, and even at different times of their life. Those with any of the many varieties of cognitive, physical, and psychological challenges can benefit from an experience that engages more than one sense. Our needs may vary from a permanent disability to a temporary challenge created by a specific environment. If there is any part of the experience that can only be understood through hearing, then you could be excluding part of your audience. One way to help make the experience individualized to accommodate the needs of each user is to offer a setting that can be adjusted at any point in the experience. There may be times when a user is in a louder physical space and might elect to have closed captioning turned on during that time. Or if a person would like to always have captioning on, they can do that too. But if you don’t include this as an option, you are excluding people from using the experience.
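To show how an adjustable setting might shape the modalities an experience uses, here is a minimal sketch in Python. The settings object, its field names, and the rule that captions appear automatically whenever sound is off are all assumptions made for illustration. A real product would base these defaults on testing with its own users.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AccessibilitySettings:
    """User-adjustable preferences that can change at any point in the experience."""
    sound_on: bool = True
    captions_on: bool = False
    haptics_on: bool = True


def output_channels(settings: AccessibilitySettings) -> List[str]:
    """Decide which modalities should carry a piece of feedback right now."""
    channels = ["visual"]
    if settings.sound_on:
        channels.append("audio")
    # Assumed rule: if the user cannot hear the audio, show captions
    # even if they have not switched them on explicitly.
    if settings.captions_on or not settings.sound_on:
        channels.append("captions")
    if settings.haptics_on:
        channels.append("haptic")
    return channels


if __name__ == "__main__":
    settings = AccessibilitySettings()
    print(output_channels(settings))

    # The user steps into a loud space and turns captions on mid-experience.
    settings.captions_on = True
    print(output_channels(settings))
```

Because the channels are recalculated from the current settings each time, a change takes effect immediately rather than only at the start of the experience.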

As you work to create multimodal experiences, consider how the user experiences each part of it. If there are any areas that are too reliant on only one input type, then that is an opportunity to expand the sensory experience to make it a more immersive experience and more accessible to the wide needs of users. This is just the beginning of this very important topic. Check out Chapter 6, “The UX of XR,” to see how you can make reality more accessible with your design choices.