Surveys

One of the primary ways to understand users and empathize with them is to hear their stories, thoughts, wants, and needs by asking them about their opinions and experiences. Surveys are an excellent tool for doing so. In this section, we’ll cover what surveys are, how to write them, and how to use them in the empathize step of the design thinking process.

What Is a Survey?

In the context of design, a UX research survey is a set of questions sent to a targeted group of users that probes their attitudes and preferences. Respondents answer the questions in a form, and their answers help us understand our product, an industry, or users’ attitudes toward something (remember that surveys are attitudinal in nature, as opposed to behavioral).

You use research surveys for two purposes:

To gain information about users (qualitative or quantitative)

To recruit potential users for research (user interviews, usability studies, and so on)

For both purposes, surveys are valuable because they scale. Talking with each user individually takes a lot of time; with a survey, you can send a link to a large number of people and collect a lot of data quickly with little effort. Writing a good survey takes an up-front investment, but that work pays dividends once the survey is out.

To make a survey, and to make it well, consider the steps involved in survey-based research.

Start with an Objective

Surveys begin with a research objective. What is the purpose of the survey? Why do you need this research method to obtain the information (or users) you’re looking for? What do you hope to gain by running a survey? And how will you analyze the responses? You need a research plan so you’re well prepared for recruiting participants and analyzing your results.

Ask Questions Around the Objective

Once you know the point of your study, you can ask questions around it. Your questions should be focused on that objective—don’t ask questions that do not directly support your objective.

For example, if you are trying to learn about ride-share app habits, do you need to know the average annual income of your users? Do you need to know their age? Or their ethnicity? You may be tempted to ask questions you think would help you build a profile of your users, but each question you ask makes it harder for your users to complete your survey. Stick to the crucial questions that support your objective.

Recruit Participants

Once your survey has a good structure, you can recruit people for your study. How will you find participants? Do you have an email list you can send a link to? Does your product let you survey users from within it? Do you have any company (or personal) social media accounts you could post to? Any of these methods could be appropriate for your research. The goal is to get participants, ideally ones who are your target users, though sometimes you can be a little more relaxed about who receives your survey (such as for a usability study).

Analyze Responses

Once you’ve sent your survey and received enough responses, you can use those responses as a data set. Some survey providers, like Google Forms, provide data visualizations for each question you ask, which you can use to form opinions about your users and better empathize. These responses can inform your opinion of who you want to design for, influence your ideation, and even help you determine certain people to interview.

Reach Out to Key Participants

As you look at your responses, you may find certain participants who would be great candidates to follow up with for an interview. You can contact these respondents and ask if they would be open to speaking with you about some additional questions. In fact, there is a subset of surveys, called screener surveys, that are designed to filter for appropriate candidates to do individual research with (like a user interview).

With a good understanding of how to structure a survey, let’s look at how you can structure questions.

Survey Question Types

Broadly speaking, a survey can have two types of questions:

Closed-response questions

Open-response questions

Closed-Response Questions

Closed-response questions are those that offer users a limited number of possible answers. The set of possible answers is “closed” and is defined by you, the researcher.

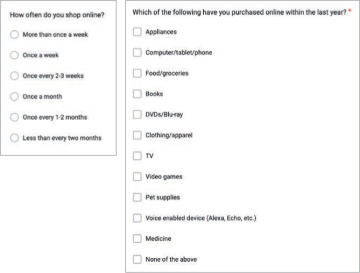

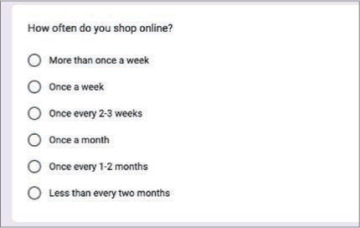

The example questions in FIGURE 2.13 are closed. The two questions have a set number of possible responses, all of which were written and decided by the researcher. In this example, the researcher is seeking a specific piece of information about participants and is using these questions to sort users into categories around it. For example, this researcher may want to see the patterns of people who shop online “often.” In that case, they may want to look only at responses from people who shop online at least once a month, filtering out anyone who selects a less frequent option.

FIGURE 2.13 Two examples of closed questions. Closed questions can be single response (radio buttons) or multiple response (check boxes).

Closed-response questions are quantitative in nature. They don’t capture context, motivation, or cause, but they are easy to visualize and simple for participants to answer (they click a button rather than write a response).
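Because closed responses come from a fixed set of options, counting and filtering them is mechanical. As a minimal sketch, assume the responses have been exported (for example, from a spreadsheet) into a list of Python dictionaries. The option labels and respondents below are hypothetical stand-ins, since the actual choices shown in FIGURE 2.13 aren’t reproduced in the text. The `shops_often` filter keeps respondents who shop online at least once a month, following the example above.

```python
from collections import Counter

# Hypothetical answer options for "How often do you shop online?",
# ordered from most to least frequent. These labels are illustrative only.
FREQUENCY_OPTIONS = [
    "Every day",
    "Once a week",
    "Once a month",
    "Every two months",
    "Less often than every two months",
]

def tally(responses, question):
    """Count how many respondents chose each option for a closed question."""
    return Counter(r[question] for r in responses)

def shops_often(response, cutoff="Once a month"):
    """True if the respondent shops at least as often as the cutoff option."""
    rank = FREQUENCY_OPTIONS.index(response["frequency"])
    return rank <= FREQUENCY_OPTIONS.index(cutoff)

# Hypothetical exported responses.
responses = [
    {"name": "A", "frequency": "Once a week"},
    {"name": "B", "frequency": "Every two months"},
    {"name": "C", "frequency": "Once a month"},
]

print(tally(responses, "frequency"))  # counts per option, ready to chart
frequent = [r["name"] for r in responses if shops_often(r)]
print(frequent)  # ['A', 'C']
```

Ordering the options from most to least frequent is what makes the “at least once a month” filter a simple comparison of positions in the list.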

Closed-response questions are easy to spot: they typically use check boxes or radio buttons and are multiple choice.

Open-Response Questions

The opposite of the closed-response question is the open-response question, for which users can provide whatever answer they feel is appropriate. The set of possible answers is open and is defined by your users, who provide answers in the words they think are best.

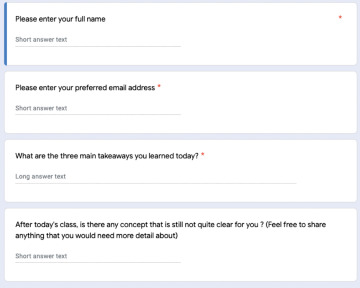

The example questions in FIGURE 2.14 are “open,” as they allow users to provide whatever answer they feel is appropriate. Here, the researcher is a teacher who wants to learn what the class understood from a lesson. The teacher has no way of knowing what students specifically learned and therefore needs them to say what they understood; it’s up to the students to provide that feedback. The teacher would use the results of the survey to adjust educational materials or to revisit concepts in class that many students say they didn’t understand.

FIGURE 2.14 Several open-response questions that ask the user to input information rather than select from a set of predetermined answers.

Open-response questions are qualitative in nature. Responses can describe the behavior around an action or how a user thinks about a problem. They’re excellent at getting users to describe a situation in their own words, which flows nicely into persona work or into advocating for users while you build your product. But because the answers aren’t organized into a system (as they are with closed questions), they take much longer to analyze and are harder to sort.

You can recognize an open-response question in a survey by looking for text boxes. These input fields are the primary way researchers structure open-response questions.

Survey Best Practices

When writing your own surveys, keep some best practices in mind to ensure you get both high-quality responses and enough of them.

Ask “Easy” Questions

Users will need to navigate your entire survey. If you ask complicated questions, or ones that are long or have a lot of responses to choose from, you are at risk of overwhelming your users. Questions should be easy to understand and easy to answer.

Ask “Neutral” Questions

Questions should be asked in a neutral way to avoid assuming an answer or introducing bias. If you ask, “What is great about our product?” you are assuming your product is great (users might not think so). If you instead ask, “What do you think about our product?” you remove that assumption and are more likely to get candid responses.

Cover All Conditions

When structuring closed-response questions, you need to think through the logic of those questions. Make sure you have every answer choice in your set of responses, or else you may alienate users who don’t fit into your choices.

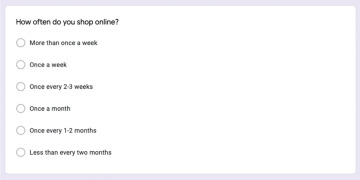

Let’s revisit the closed-response question from before (FIGURE 2.15). Imagine the question with the last answer choice removed. The question might still look complete, but it would miss any user who doesn’t shop online at least every two months. Perhaps you don’t want to learn more about that user, but you should still include that option as a response.

FIGURE 2.15 When using closed-response questions, make sure every logical possibility is covered. What if a respondent shops online every day? What if they never shop online? We’ve covered all logical possibilities by structuring the question in this way.

Keep It Short

Be mindful of how long your survey is: the longer it is, the lower your completion rate will be. This is why it’s crucial to ask only the most important, most relevant questions for what you’re trying to learn. Even a single unnecessary question can reduce the number of people who finish your survey.

Show Progress

If you can, use a tool that lets users see their progress while taking the survey. Knowing how much of the survey remains improves completion rates among people who start it, because they understand how much more time they need to spend. Tools like Google Forms, Qualtrics, and Typeform can display a progress indicator to respondents as they fill out the survey.

Test It

As with all your designs, you should test your survey before inviting users to complete it. Just as you would with a prototype, try it internally or with a small group of people before sharing it widely with the public. Does the survey behave as you expect? Did you leave out crucial questions? Do some questions feel poorly worded? This is the perfect time to make sure what you wrote works.

Survey Bias

When you’re creating and distributing surveys, be mindful of introducing bias. Bias can skew responses and lead you to draw conclusions that are incorrect or don’t accurately represent your users. It can enter through how you write your questions, how you distribute your surveys, and how you interpret your results.

Leading Questions

Leading questions steer respondents toward a particular answer. One subtle form is priming, where earlier questions influence how users answer later ones. Imagine you list all your website’s service options in a question and ask users which ones they use. Then, in the next question, you ask users whether your website is robust and has a lot of options. You’re introducing bias into your survey: you just showed users all your options and then asked whether you have a lot of options. Instead, flip the order of these questions. First ask whether users think you have a lot of options, then ask which options they use. It may look like a small change, but it’s important to avoid this type of bias in your study.

Double-Barreled Questions

Double-barreled questions ask two things at the same time. Each question should elicit a single response to make it clearer and easier for your users and to avoid bundling answers together. For example, if you ask, “Do you want to buy jeans and a T-shirt?” you exclude users who want to buy one of those items but not both.

Undercoverage

Undercoverage occurs when part of the population you want to study is left out of the people your survey reaches. For example, think of telephone surveys. It’s common to survey people’s opinions of political candidates by calling landlines and questioning the people who answer. This technique, however, misses anyone who doesn’t have a landline or who screens their phone calls.

To avoid undercoverage, distribute your survey through multiple channels so it reaches every segment of your target population.

Nonresponse

Nonresponse bias occurs when you send out a survey and the people who choose to complete it are meaningfully different from the people who don’t. Perhaps the people who are inclined to complete the survey have a different personality type or set of opinions, which would affect the results meaningfully. Consider census data, for example, where non-respondents may be in a different economic situation than respondents. Or perhaps they could be digital nomads, traveling from city to city without a permanent address or one that they check often.

It’s hard to avoid every type of bias. But being aware of them will help you structure your surveys well and choose where to look for people to take them.

Recruiting Participants

Once the survey is ready to go and you are confident about its structure and content, you can begin finding people to take it. There are many methods of recruitment, some more feasible than others depending on your working situation.

Current Users

One of the best ways to get feedback about your product is to ask the people who currently use it. They have the most context and are the most invested in your product, so they’re well placed to give quality feedback about it. You can reach these users by placing survey links inside your product or by emailing them through the product itself.

Social Media

Social media is often the most practical way to get feedback for personal projects or when you have a limited research budget. Posting your survey on LinkedIn, in Slack or Discord groups, or on other social media channels can attract participants quite easily. The quality of these participants is uncertain, though, since you lack context about their experience with your product (and you don’t know whether they even fit your user demographics). Still, this method is valuable for fast, easy research, and it’s especially common among students.

Recruitment Services

If you have an extensive research budget, recruitment services can help ensure quality participants. Services like Maze, UserTesting.com, and others can find users for you based on a set of parameters you provide. You can filter by age, income, product usage, or professional industry to target more specific users. This also lets you avoid asking these screening questions in the survey itself, leaving room for the questions you really want answered.

Online Advertisements

As with the social media approach, you can take out ads asking people to complete your survey. Sometimes paired with compensation, this is the widest-reaching method for finding participants, but that reach means you have little control over who responds, which puts the quality of your responses at risk. Still, it’s a viable option for research teams with the budget for it.

Survey Example

With all this in mind, let’s look at an example survey created for a student project to understand user opinions regarding electronics e-commerce.

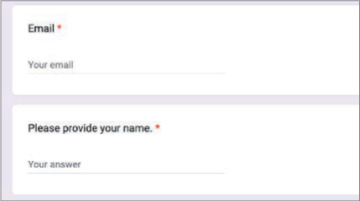

The survey begins with some simple logistical information: name and email (FIGURE 2.16). We need these details to reach out to this individual should we want to conduct an interview.

FIGURE 2.16 The first two questions of our survey. They’re easy, not too committal, and logistically important.

Next, we ask about online shopping behavior—here (FIGURE 2.17), we want to filter for people who shop online often, as they are the target audience and more familiar with online shopping.

FIGURE 2.17 A closed, single-response question.

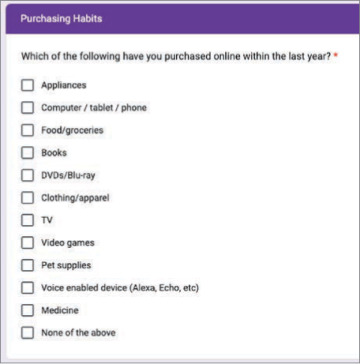

Next, we ask about purchasing habits (FIGURE 2.18). We care only about electronics purchases, such as a computer or TV, but we list other types of goods as well so we don’t prime users or lead them toward a specific response.

FIGURE 2.18 A closed, multiple-response question.

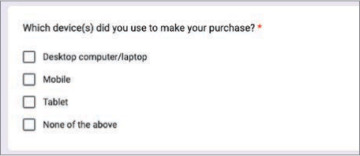

Next, we ask about the device used to make the purchase (FIGURE 2.19). We care mostly about desktop devices for the design problem, so we are trying to filter for that device.

FIGURE 2.19 Another closed, multiple-response question.

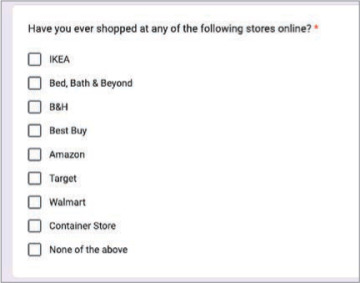

Next, we ask about the stores (FIGURE 2.20) used to make the purchase. In this project, we are trying to redesign Best Buy’s website, so we hope to speak with users who have used that site before. However, we’re still open to talking with people who use other sites, so it depends on how users respond to this question.

FIGURE 2.20 A closed, multiple-response question to determine the stores where the respondent makes purchases.

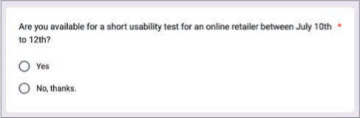

Lastly, we want to know whether the user is open to participating in a usability test with us during a certain time range; we had a tight research timeline for this project and needed to talk with people immediately. We prescreen for users willing to speak with us (FIGURE 2.21) so we don’t waste time contacting people and waiting for a reply from someone who turns out to be uninterested.

FIGURE 2.21 A closed, single-response question to determine the receptiveness to an interview.

This survey was sent out via social media, and after 30 participants took it, we found enough people for us to talk with directly for our research.
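Screening the responses amounts to applying the criteria described above in order. The following Python sketch assumes the responses were exported into a list of records; every field name, option label, and respondent in it is hypothetical rather than taken from the actual survey. The must-have criteria (frequent online shopping, electronics purchases, desktop use, and availability for a usability test) exclude respondents, while the preference for Best Buy users only changes the order of the shortlist, mirroring the note that people who use other sites are still welcome.

```python
# Hypothetical screener responses; field names and option labels are
# illustrative, not the actual wording of the survey in Figures 2.16-2.21.
responses = [
    {"name": "Ana", "email": "ana@example.com", "frequency": "Once a week",
     "purchases": {"Electronics", "Clothing"}, "devices": {"Desktop", "Phone"},
     "stores": {"Best Buy", "Amazon"}, "available": True},
    {"name": "Ben", "email": "ben@example.com", "frequency": "Every two months",
     "purchases": {"Groceries"}, "devices": {"Phone"},
     "stores": {"Amazon"}, "available": True},
    {"name": "Cy", "email": "cy@example.com", "frequency": "Once a month",
     "purchases": {"Electronics"}, "devices": {"Desktop"},
     "stores": {"Newegg"}, "available": True},
    {"name": "Dee", "email": "dee@example.com", "frequency": "Every day",
     "purchases": {"Electronics"}, "devices": {"Desktop"},
     "stores": {"Best Buy"}, "available": False},
]

# Hypothetical set of options that count as shopping online "often".
FREQUENT = {"Every day", "Once a week", "Once a month"}

def qualifies(r):
    """Must-have criteria: shops often, buys electronics, uses desktop, available."""
    return (
        r["frequency"] in FREQUENT
        and "Electronics" in r["purchases"]
        and "Desktop" in r["devices"]
        and r["available"]
    )

def shortlist(responses, preferred_store="Best Buy"):
    """Qualified respondents, with users of the preferred store listed first."""
    qualified = [r for r in responses if qualifies(r)]
    # False sorts before True, so preferred-store users come first;
    # sorted() is stable, so the original order is kept within each group.
    qualified = sorted(qualified, key=lambda r: preferred_store not in r["stores"])
    return [(r["name"], r["email"]) for r in qualified]

print(shortlist(responses))
# [('Ana', 'ana@example.com'), ('Cy', 'cy@example.com')]
```

Treating the store as a sort key rather than a hard filter keeps the shortlist from shrinking too far when only a small number of people respond.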

Using methods like these will enable you to conduct your own research, no matter the problem you are trying to solve.

Let’s Do It!

Now that we’ve covered what makes a good survey, let’s make one for ourselves! Remember that the problem space you want to apply design thinking to is solo travel—what can you do to encourage or otherwise support solo travel? You want to enrich and improve the lives of solo travelers.

To do so, you need to understand more about the solo traveler. Who are they? What do they want? What do they need? What are their goals?

To do this, you need to find solo travelers and talk with them.

To find solo travelers, one method to use is the survey. If you create a survey and distribute it to the public, you can find solo travelers to speak with and ask them about their experiences.

Your task? Create a screener survey for solo travelers. To do this, keep the tenets of good survey design in mind:

Be neutral. Don’t lead users toward one answer or another.

Be short. Don’t ask too many questions, or people won’t fill out your survey.

Ask easy questions. Survey respondents shouldn’t have to think too hard when answering questions, and they shouldn’t be writing essays. Save the harder questions for the actual interviews.

Remember, you are screening for participants. The survey should be designed to find great people to talk with, not to answer all your questions.

You can use whatever platform you like, but I recommend Google Forms for simplicity, cost, and the ability to share with others.